Deployment Age #13: GPT-3

GPT-3, Projects & Demos, Implications, Use-cases, Interesting Reading

Hi there,

I hope you and your family are healthy and well.

I’ve recently been thinking about the uses and implications of OpenAI’s latest wunder-model, GPT-3.

I’ve also published a couple of short thoughts on Innovation Management:

GPT-3

OpenAI’s GPT-3 is the world’s most sophisticated natural language technology. It’s the latest and greatest text-generating neural network. And it has the Twittersphere abuzz.

I want to speak about the implications of the latest hype. But first, a short description of the beast itself.

While the vast majority of AI systems are designed for one use-case and tightly trained for that, GPT-3 is the latest in a lineage of natural language processing technologies known as Transformers that are far more generalizable.

In effect, Transformers process massive amounts of text to learn some general attributes of language (pre-training). This knowledge is then used as a good starting point for finessing specific language understanding tasks (fine-tuning). This two-step approach dramatically reduces the amount of labelled training data required for specific natural language tasks.

So what’s new? The main difference between GPT-3 and its predecessor, GPT-2, is its size.

Size matters.

In AI, size especially matters. Peter Norvig continues to be correct, only more so - “Quantity has a quality all of its own”. Specifically, GPT-3’s size (175B parameters) is directly related to its effectiveness (mean human accuracy at detecting whether an article was AI-generated was ~52%, barely better than a coin flip).

More concretely:

Language model performance scales as a power-law of model size, dataset size, and the amount of computation.

A language model trained on enough data can solve NLP tasks that it has never encountered. In other words, GPT-3 treats a single large model as a general solution for many downstream tasks, with no fine-tuning required.
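To make the power-law point a little more tangible, here is a minimal Python sketch of what "performance scales as a power-law of model size" looks like. The functional form is the generic power law; the constants `N_C` and `ALPHA` are hypothetical values picked only to show the shape of the curve, not measured figures, and the function names are my own.

```python
def power_law_loss(n_params, n_c, alpha):
    """Loss under a pure power law in model size: L(N) = (N_c / N) ** alpha."""
    return (n_c / n_params) ** alpha


def improvement_factor(scale_up, alpha):
    """Factor by which loss is multiplied when model size grows by `scale_up`."""
    return scale_up ** -alpha


# Hypothetical constants, chosen only to make the shape visible.
N_C = 1e14
ALPHA = 0.08

# Parameter counts as quoted in this newsletter.
MODEL_SIZES = {
    "GPT-2 (1.5B parameters)": 1.5e9,
    "GPT-3 (175B parameters)": 175e9,
}

if __name__ == "__main__":
    for name, size in MODEL_SIZES.items():
        loss = power_law_loss(size, N_C, ALPHA)
        print(f"{name}: illustrative loss {loss:.3f}")
    factor = improvement_factor(10, ALPHA)
    print(f"10x more parameters multiplies the loss by {factor:.3f}")
```

The thing to notice is that under a power law, every 10x increase in size buys the same proportional improvement, no matter where you start. That is why simply going bigger keeps paying off.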

GPT-3 Projects & Demos

Two days ago, Twitter lit up with interesting and excellent demos and projects built on top of GPT-3. Here are a few that stood out, and should give you a good flavour of what’s possible.

Word

Sushant Kumar has created this micro-site that takes a word and outputs a GPT-3-generated sentence based on it. Replace ‘word’ at the end of the following web address to see GPT-3 strut its stuff: https://thoughts.sushant-kumar.com/word

Here are some examples, only slightly cherry-picked.

GPT-3 on Progress. “Civilization rose on the exponential curve. We shouldn’t expect progress to follow a straight line.”

GPT-3 on Technology. “Technology optimizes systems. People optimize culture.”

GPT-3 on Deep Learning. “M&A for deep-learning research would be like chocolate goodies for Santa.”

GPT-3 on Blockchain. “All cryptocurrency is fraud. It is backed by nothing real and it is made out of thin air (except the electricity used).”

GPT-3 on Design. “Mistakes are bad, but deleting mistakes is worse.”

GPT-3 on UX. “User experience is first and foremost for the users' experience.”

Code

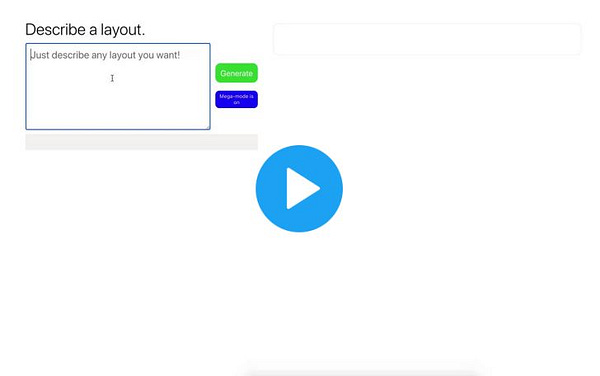

Sharif Shameem at debuild.co has built a few really exciting demos. Just describe what your app should do in plain English, then start using it within seconds.

He has whipped up demos for:

Turing Test

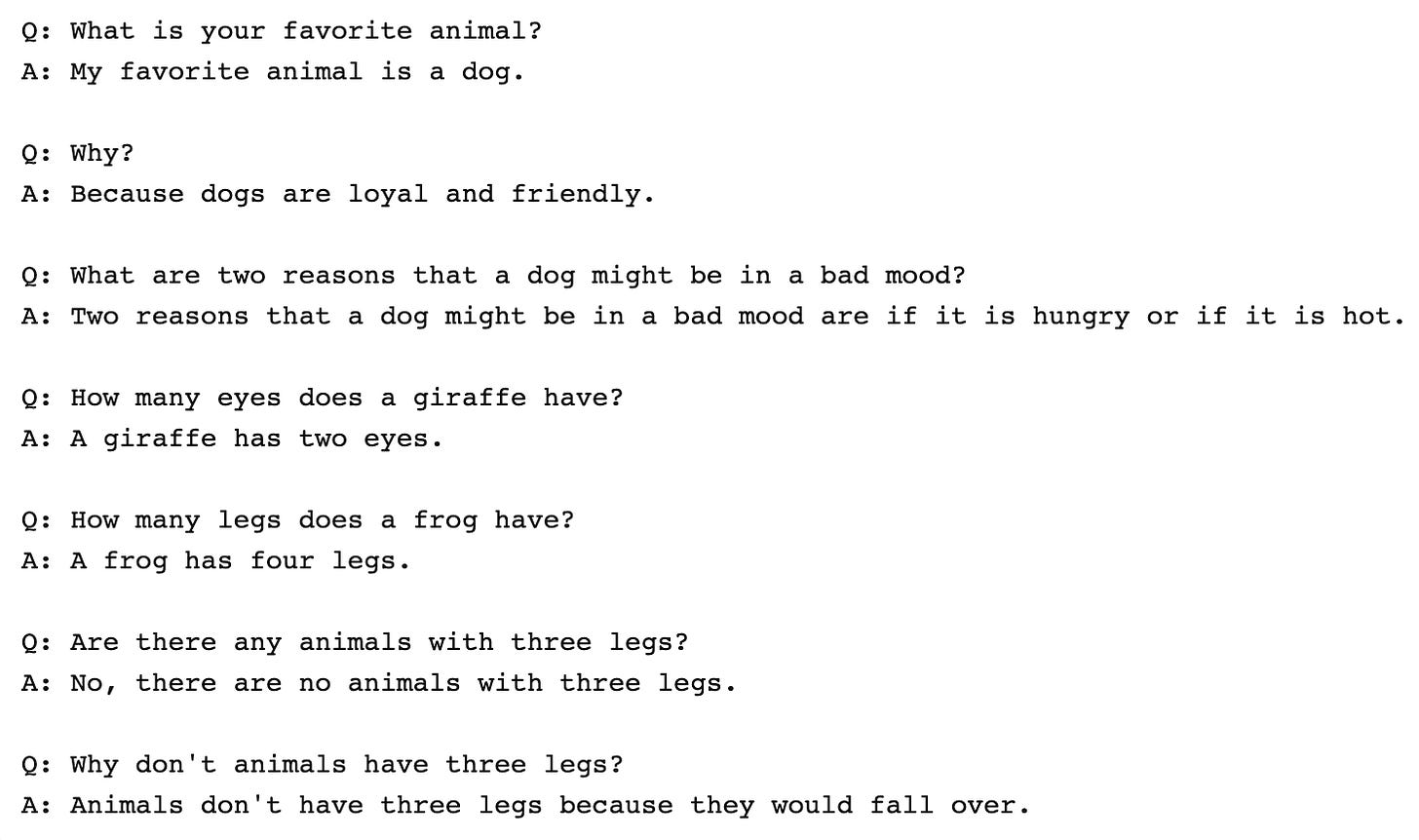

Kevin Lacker has sat GPT-3 down and given it the Turing Test. Kevin tests for Common Sense, Trivia and Logic. His conclusion: “GPT-3 is quite impressive in some areas, and still clearly subhuman in others.” A bit like myself.

Traditionally, artificial intelligence struggles at “common sense”. Here’s GPT-3 answering a lot of common sense questions.

Conversations

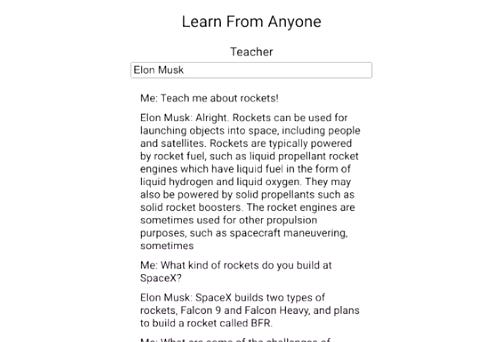

Mckay Wrigley designed an app that uses GPT-3 to allow you to ‘learn from anyone’. Ever wanted to learn about rockets from Elon Musk? How to write better from Shakespeare? Philosophy from Aristotle?

At the time of posting, learnfromanyone was down but should shortly be back up and running.

Design

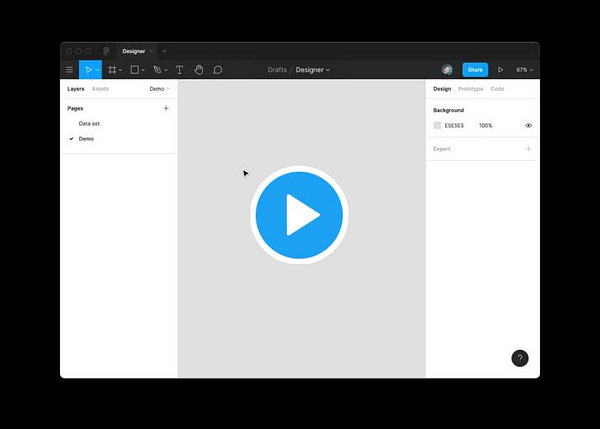

Jordan Singer has built a plugin for Figma that uses GPT-3 to design with/for you. You give the plugin instructions like "add a blue square" or "give me a pink circle that's 500px".

Jordan defined a basic representation of the Figma canvas, which GPT-3 learns to generate after being shown a few raw text examples. That representation is then translated into Figma plugin code to build a screen.
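For a feel of how "a few raw text examples" can steer the model, here is a rough Python sketch of assembling a few-shot prompt. Everything in it is an assumption for illustration: the JSON-like canvas format, the `description:` / `canvas:` labels, and the names `EXAMPLES` and `build_prompt` are hypothetical and are not Jordan's actual representation. It only builds the prompt text and does not call any API.

```python
# Hypothetical (description, canvas representation) pairs shown to the model.
EXAMPLES = [
    ("add a blue square",
     '{"shape": "rectangle", "fill": "blue", "width": 100, "height": 100}'),
    ("give me a pink circle that's 500px",
     '{"shape": "ellipse", "fill": "pink", "width": 500, "height": 500}'),
]


def build_prompt(examples, request):
    """Lay out worked examples, then leave the final canvas line open to complete."""
    lines = []
    for description, canvas in examples:
        lines.append(f"description: {description}")
        lines.append(f"canvas: {canvas}")
        lines.append("")  # blank line separates one example from the next
    lines.append(f"description: {request}")
    lines.append("canvas:")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_prompt(EXAMPLES, "add a green button"))
```

The model's job is then simply to continue the text after the last `canvas:` line, and a separate piece of ordinary code would turn that continuation into plugin calls.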

Summarisation

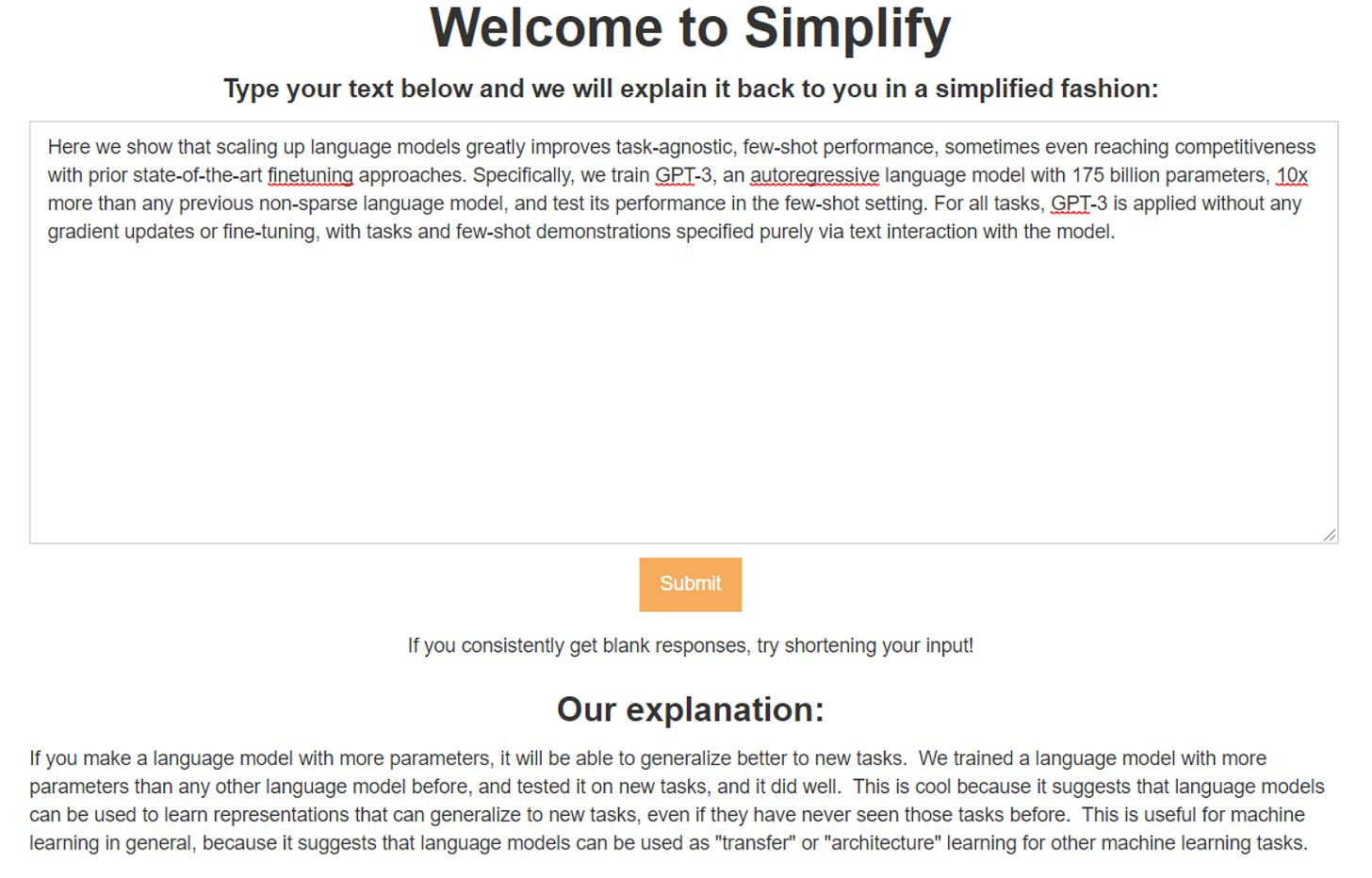

Chris Lu built an Explain Like I’m 5 website using GPT-3.

Ideation

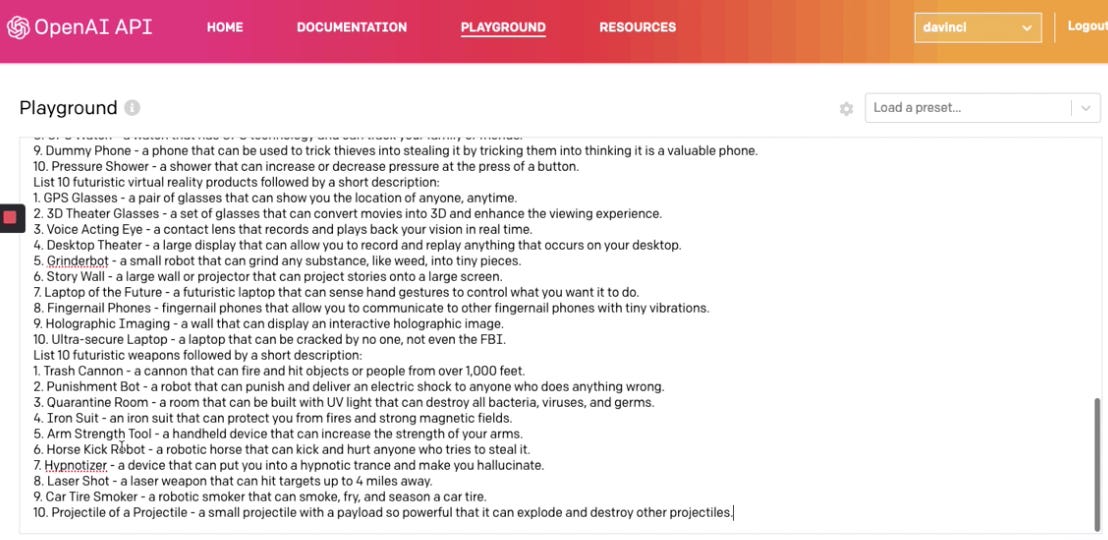

Paul Yacoubian uses a GPT-3 seed primer to generate an endless stream of ideas.

GPT-3 Implications

GPT-3 is an inflection point for natural language processing. But not because it’s a great conceptual leap forward.

GPT-3 feels different. The range of demos attests to that. It has poured burning fuel on a flammable hype factory. A factory that’s almost religiously primed to latch on to any promise of computers appearing to act intelligently.

Why?

For a start, it’s better than the previous generation of models. It has crossed some invisible threshold: GPT-3 is obviously fun and useful even for a newbie, whereas GPT-2 demanded more time invested by the user (e.g. Gwern).

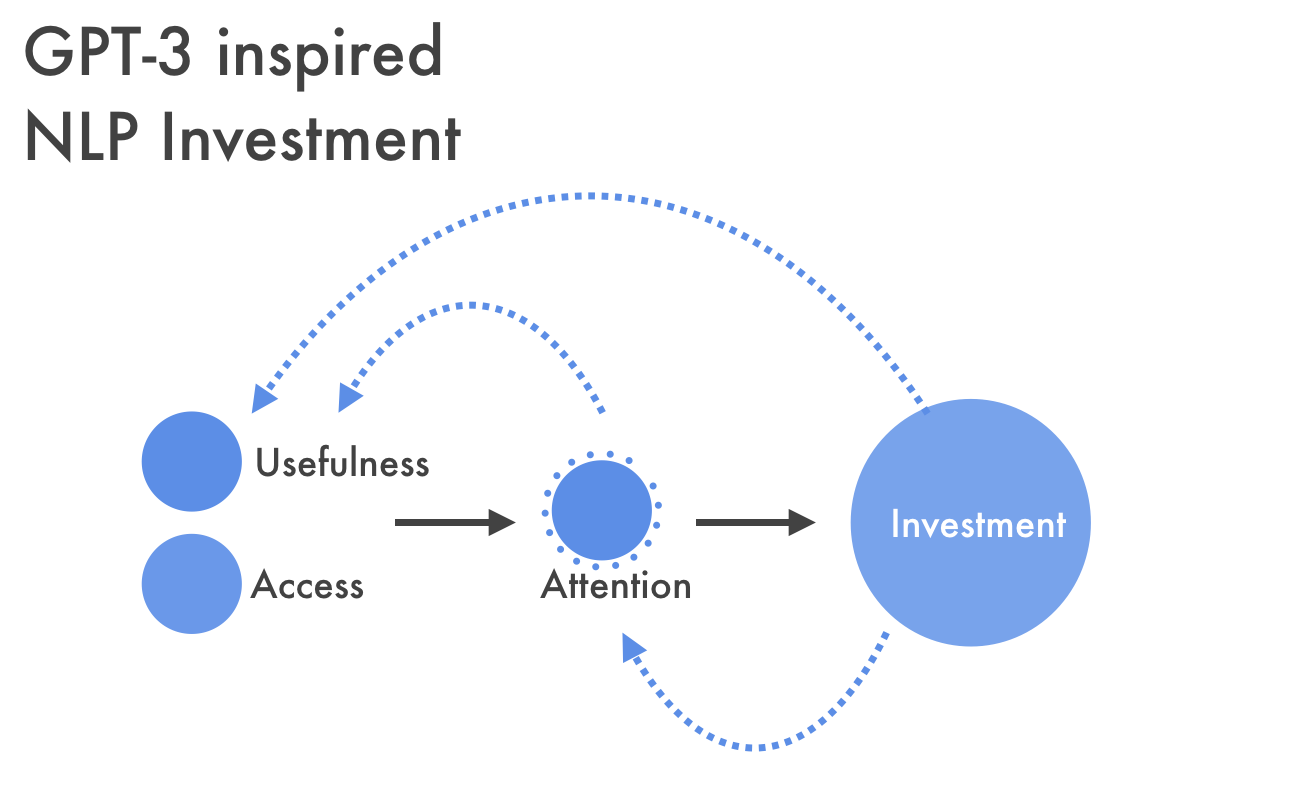

It’s also highly accessible. Opening access via the beta API has led to a furious spread of demos and projects - and this brings the potential of the technology to life. Tangibility is important.

On top of that, you can interact with GPT-3 via English. It requires only a minimal amount of specific knowledge. The profiles of those creating the demos and cheering them on from the sidelines are different than before. It’s not just AI practitioners and trend-watchers. It’s hackers.

My prediction is that the primary legacy of GPT-3 will be a dramatic expansion of who will try new things with AI and what they’ll be inspired to try with it.

This may well be the Homebrew Computer Club for the newest generation of NLP.

So what next?

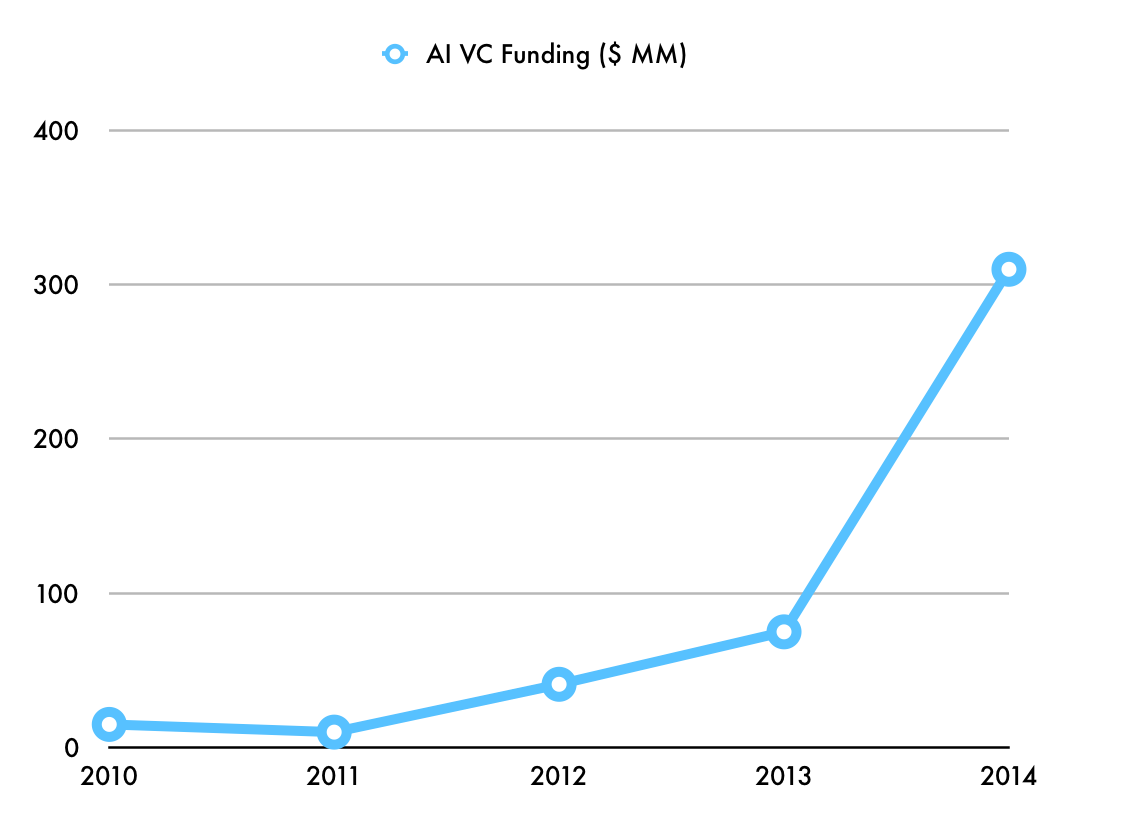

I agree with Azeem Azhar when he draws a parallel to how the boom in machine vision capabilities triggered a wave of AI startups, VC investment, and corporate spending. This is what pure-play AI VC funding looked like at the start of the computer vision boom.

Azeem puts it nicely:

And so today in 2020, we think nothing of high-quality computational manipulation of images. We open our phones with our faces. We track visitors with our cheap consumer webcams. I have a free app that recognises any plant I point my phone at. Capabilities that were not available anywhere in the world a decade ago are now too cheap to meter. Autonomous vehicles are dependent on these breakthroughs to navigate the roads.

If GPT-3 and natural language processing follow a similar path to machine vision, we can expect rapid improvements in applications that use text in the coming 3-5 years.

This matters.

Use-cases

What would a flurry of investment in NLP mean?

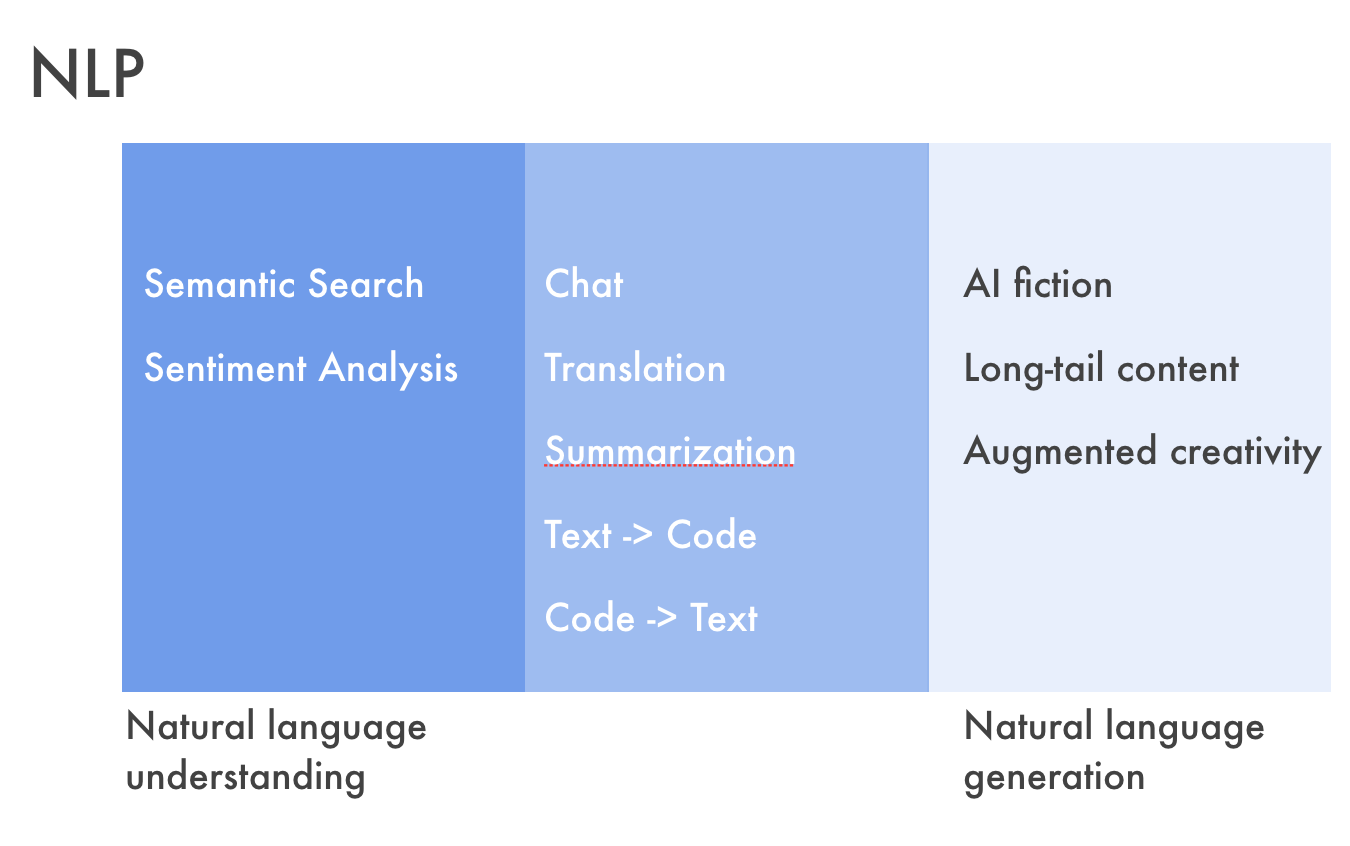

For a start, we can expect a proliferation of standard NLP use-cases, driven by performance improvement and increased accessibility.

These NLP tasks are not new; they’ve been around since the ‘80s. Most of us use things like semantic search, machine translation and chatbots on a regular or semi-regular basis. Sizeable improvement would mean greater adoption and potentially lead to a change in who provides these services. For example, could dramatically improved semantic search built on a pay-per-access AI layer loosen Google’s grip on Search?

There are also the downstream implications for the use of Voice. While usable, Voice today remains clunky. Filling in the many gaps in Siri’s and Alexa’s knowledge would go a long way to instilling confidence in the user, and spark increased usage of voice in the first place.

Then there’s the impact on content generation. Who’d bother writing when an AI can do it for you? Well, me for a start. Plus a myriad of others far more talented. Where AI content generation has real potential to disrupt is at the lowest end. The real losers here will be long-tail, low-end content generators - the WikiHows and below.

The greatest potential as I see it, however, sits outside these well-trodden, general paths. Relaxing the need for massive amounts of training data shifts what use-cases NLP can be pointed toward. Bringing NLP to neglected, unattractive corners of commerce would be a game-changer for areas such as financial reporting, technical writing, and medical reporting. Augmenting the capabilities of readers and writers shifts how this type of content is created and consumed … and by whom.

Finally, there are the second-order implications of mass generation of AI text. Deep-fakes aren’t going away anytime soon, and so authentication of content just became more important.

Interesting Reading

GPT-3 Creative Fiction. Gwern puts GPT-3 through its paces, finding that it does not just match his fine-tuned GPT-2-1.5b-poetry for poem-writing quality, but exceeds it, while being versatile in handling poetry, Tom Swifty puns, science fiction, dialogue like Turing’s Turing-test dialogue, and literary style parodies. This longread records GPT-3 samples generated in his explorations, along with thoughts on how to use GPT-3 and some discussion of its remaining weaknesses.

AI & the word. Azeem Azhar muses on the ascendance of natural language processing.

Quick Thoughts on GPT3. Delian Asparouhov takes GPT-3 for a spin and concludes that it is a “racecar for the mind”.

Computers do not make art, people do. Art has a long history of evolving in response to new technologies. In the past century, many of these technologies have led to debates and misunderstandings about the role of the artist. Tools that seemed at first to make artists irrelevant actually gave them new expressive opportunities. Today, technologists claim that their algorithms are artists, and journalists suggest that computers are creating art on their own. These discussions usually betray a lack of understanding about art, about AI, or both.

As always, I’d be truly grateful for any feedback on this newsletter. What, if anything, would you like to hear more about?

I would genuinely like to hear how you’re doing.

Take care,

Simon